Facebook’s 2025 spam crackdown is here, and it’s not just another algorithm tweak. It’s a full-court press against spammy content, artificial engagement, and impersonation—aimed at restoring authentic creator reach on the world’s biggest social network. If you’re a marketing leader, agency director, or content strategist, this update defines what still works (and what lands you in Facebook jail) for 2025 and beyond.

Understanding Facebook’s New Spam Detection AI

Meta’s revamped detection AI now works alongside the 2025 Facebook algorithm to detect and penalize accounts that share spammy content at scale. Unlike in earlier years, the crackdown relies on:

- Behavioral signals (excessive, irrelevant hashtags; repetitive content posted across many accounts)

- AI-powered analysis of captions (downranking posts with misaligned or distracting text)

- Rapid network detection—flagging coordinated fake engagement faster than ever

Why this matters: The old tricks—caption stuffing, copy-paste frenzy, or manufactured likes—now result in your content losing reach or monetization status. According to Meta’s Transparency Center, over 100 million fake pages and 23 million impersonator accounts were removed in 2024 alone, yet new detection waves are already scaling up for 2025.

Comparison: 2024 penalties were mostly applied post by post. In 2025, entire accounts and their monetization access are at risk if patterns persist.

5 Types of Content Now Facing Stricter Moderation

Meta’s enforcement is less about intent, more about behavior. Here’s what the 2025 Facebook spam crackdown targets:

- Overly long or distracting captions

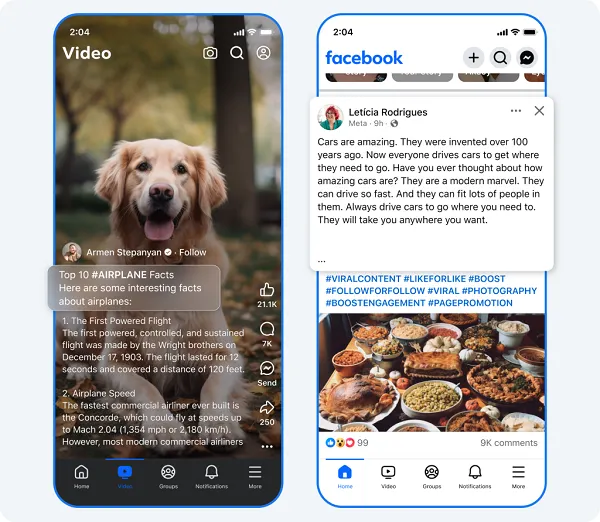

- Unrelated hashtags or unrelated text (example: puppy pic + airplane facts)

- Duplicate posts distributed across multiple accounts

- Coordinated fake engagement (bot-like comment/reaction farms)

- Impersonation of established creators/brands

Impact Alert for Marketers & Creators:

- Content flagged as spam is shown only to your current followers, and monetization is suspended.

- Automated detection is now paired with manual review (human plus AI).

- Penalties escalate with repetition: patterns matter more than one-offs.

As Meta explains: “Some accounts post content with long, distracting captions, often with an inordinate amount of hashtags. While others include captions that are completely unrelated to the content – think a picture of a cute dog with a caption about airplane facts. Accounts that engage in these tactics will only have their content shown to their followers and will not be eligible for monetization.”

Why do people do this?

According to some industry theories, these off-topic or lengthy captions may carry extra keywords meant to manipulate algorithmic reach, or may encourage longer read times (and thus more video loops, which inflate engagement metrics). However, Meta’s AI increasingly detects and penalizes such behavior, regardless of the motivation behind it.

What triggers Facebook Jail in 2025?

Facebook Jail isn’t a literal cell; it is shorthand for the algorithmic demotion and feature lockouts that stall your campaigns. The 2025 triggers include:

- Sharing identical content repeatedly across many accounts

- Using hashtag spam or misleading captions

- Engaging in (or benefiting from) organized fake engagement networks

- Impersonating other creators or brands

If your account is flagged, expect:

- Sudden, sharp drop in post reach

- Loss of access to monetization tools

- Suspensions or bans after repeat patterns

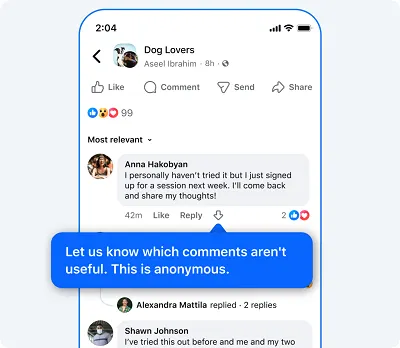

Meta is also rolling out experimental comment downvote features again in 2025, letting users downvote low-value or spammy comments to help algorithmically demote junk contributions. Although comment downvotes have been tested before (in 2018, 2020, 2021, and even on Instagram), there’s some ongoing confusion over how users apply the feature—sometimes as a reaction to disagreement rather than to flag actual spam or abuse. Still, Meta views downvotes as another tool to clean up low-quality replies.

How does Facebook detect spam accounts?

Meta’s AI system cross-references signals like:

- Simultaneous mass sharing

- Bot-like account growth

- Unnatural engagement spikes (think: sudden hundreds of likes, or identical comments across posts)

- Use of suspicious language, deceptive linking, or prompt swapping

Meta notes that spam networks may create hundreds of accounts specifically to distribute the same spammy content, cluttering user feeds. When such coordinated activity is detected, reach and monetization potential are severely limited, and repeat offenders risk further penalties or bans.

For full enforcement stats and a technical deep dive, see Meta’s Transparency spam enforcement page.
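Teams that manage several Pages can run a rough self-check for the duplicate-distribution pattern described above before scheduling posts. The Python sketch below is only an illustration: the function names, the (account, caption) input shape, and the normalization rules are all assumptions made for this example, and it does not reflect how Meta’s systems actually work.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase, drop hashtags and punctuation, and collapse whitespace."""
    text = re.sub(r"#\w+", " ", text.lower())
    text = re.sub(r"[^\w\s]", " ", text)
    return " ".join(text.split())


def find_cross_account_duplicates(posts, min_accounts=2):
    """Group captions that are identical after normalization.

    posts: iterable of (account_name, caption) pairs (hypothetical shape).
    Returns {normalized_caption: sorted list of accounts} for every caption
    that appears on at least `min_accounts` distinct accounts.
    """
    seen = defaultdict(set)
    for account, caption in posts:
        key = normalize(caption)
        if key:  # skip captions that are empty after normalization
            seen[key].add(account)
    return {k: sorted(v) for k, v in seen.items() if len(v) >= min_accounts}


if __name__ == "__main__":
    drafts = [
        ("page_a", "Big sale today! #deals"),
        ("page_b", "big sale today"),
        ("page_c", "Fresh recipe inside"),
    ]
    for caption, accounts in find_cross_account_duplicates(drafts).items():
        print(f"'{caption}' is scheduled on: {', '.join(accounts)}")
```

This only catches captions that match exactly after normalization. Lightly reworded copies would pass, so treat a clean result as a first pass and not as clearance.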

Can you monetize with restricted reach on Facebook?

If your account is flagged during the 2025 crackdown, your content is quarantined—visible only to your followers. Critically:

- Monetization eligibility is paused on flagged accounts/content

- Even if you recover reach, past flagged posts may still be ineligible

- Suspensions can now be account-wide, not just post-level

Pro tip: Audit your Page for all signals before applying for monetization or boosting spend.

How to protect original content from impersonators?

With 23 million impersonator profiles removed last year, Meta’s team now offers multiple defenses:

- Rights Manager: Submit your original work to auto-detect and block unauthorized copies

- Moderation Assist: Facebook’s comment manager flags and hides suspicious accounts imitating you in comments

- Quick Reporting: Creators can now instantly flag impersonation attempts

Case example: After a popular fitness creator faced repeat copycat accounts, Rights Manager notified them within 24 hours of the latest breach, and the fake page was removed.

Meta continues to push its Rights Manager tools to help creators and brands proactively tackle imposters and fakes, underscoring the importance of active IP protection strategies in 2025.

What’s considered fake engagement on Facebook?

Meta’s definition covers:

- Buying or selling likes, followers, or shares

- Coordinated comments (via group chat or bot)

- Scripted engagement (copy-paste comments, inauthentic mass reactions)

- Engagement pods (even well-meaning ones can violate Meta’s spam policy in 2025)

- Automated engagement tools (which, if detected, result in immediate penalties)

Check Meta’s anti-spam policy breakdown.

Creator Protection Tools: Rights Manager Deep Dive

Creators and brands now have upgraded safeguards against theft, impersonation, and reach sabotage:

- Automated copyright detection flags unauthorized uploads

- Custom claim settings let you choose to block, monitor, or monetize others’ uses

- Granular analytics: See who’s sharing your content—where, when, and at what volume

- Step-by-step Rights Manager setup:

  - Register your content

  - Choose enforcement options

  - Monitor the Rights Manager dashboard for new infractions

As spam networks evolve, so do copyright abuse attempts. Rights Manager adapts with new AI and tighter integrations across Meta platforms.

Hashtag Best Practices Under Updated Algorithms

Hashtags can help or hurt in 2025:

- Keep it relevant and concise (3-5 targeted tags max)

- Avoid generic, high-volume spammy tags (#viral, #like4like)

- Captions must match post content; a mismatch can trigger an automated flag

- Focus on conversation starters, not keyword stuffing

Note: Overused or irrelevant tags are now directly tied to reach limits or content hiding.
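The hashtag guidance above can be turned into a simple pre-publish check. The following Python sketch is a minimal illustration under stated assumptions: the five-tag ceiling and the generic-tag list come from this article’s recommendations and are not limits published by Meta, and `audit_caption` is a hypothetical helper name. It cannot judge whether a caption matches the post’s content, which still needs a human review.

```python
import re

# Assumed thresholds based on the guidance in this article, not Meta's rules.
MAX_TAGS = 5
GENERIC_TAGS = {"#viral", "#like4like"}

HASHTAG_RE = re.compile(r"#\w+")


def audit_caption(caption: str) -> list[str]:
    """Return human-readable warnings for a draft caption (empty if none)."""
    tags = [tag.lower() for tag in HASHTAG_RE.findall(caption)]
    warnings = []

    # Too many hashtags overall.
    if len(tags) > MAX_TAGS:
        warnings.append(f"{len(tags)} hashtags; aim for {MAX_TAGS} or fewer")

    # Generic, high-volume tags that the article recommends avoiding.
    generic = sorted(set(tags) & GENERIC_TAGS)
    if generic:
        warnings.append("generic tags: " + ", ".join(generic))

    # The same tag used more than once in a single caption.
    repeated = sorted({tag for tag in tags if tags.count(tag) > 1})
    if repeated:
        warnings.append("repeated tags: " + ", ".join(repeated))

    return warnings


if __name__ == "__main__":
    for issue in audit_caption("Weekend brunch is back #brunch #viral #brunch"):
        print("warning:", issue)
```

Running a check like this inside a scheduling workflow keeps tag counts and obvious spam markers consistent across a team, while relevance decisions stay with the person writing the post.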

Recovering From Reach Restrictions: Case Studies

Case 1: A hospitality brand was flagged for repetitive weekly promos. After the team overhauled captions, reduced hashtags, and audited engagement, reach improved within 2 weeks.

Case 2: A DIY creator’s Page was hit after participating in engagement pods. After the creator paused activity, filed an appeal, and deleted suspicious posts, tools were restored in 4 weeks.

Pro recovery tips:

- Purge flagged posts

- Check Page Quality dashboard weekly

- File appeals via the Facebook Help Center

- Shift focus to original, non-repetitive multimedia

- Monitor analytics for bounce-back signals

Future-Proofing Your Content Strategy for 2026

Here’s how marketers and creators can stay resilient (and visible) as Facebook keeps tightening the spam screws:

- Regularly review Meta’s spam policy updates

- Use Rights Manager for IP protection

- Vet hashtags and avoid patterns known to trigger penalties

- Emphasize engagement quality over volume

- Leverage Moderation Assist and reporting tools proactively

Context and Implications

For creators and marketers:

- Spam signals are cumulative: automation, copy/paste, and sloppy hashtags are all risk factors.

- Fast-action tools like Rights Manager are now essential, not optional.

- Less is more: one smart, well-crafted post will outrank 10 copycat attempts.

What’s Next? (Forecast & Tips)

- AI models will autonomously flag nuanced signals—expect spot-checks even on past content.

- Rights Manager to expand into short-form and cross-platform video.

- Meta to publish granular policy FAQs—bookmark these for your comms/legal teams.

- Additional integrations between Moderation Assist and third-party moderation tools expected.

Key Takeaways

- Meta’s 2025 Facebook spam crackdown targets repetitive, misleading, and inauthentic engagement at the algorithmic level.

- Monetization halts if you break policy, so review content before applying for monetization or boosting spend.

- Rights Manager and Moderation Assist offer creators/brands stronger defense against impersonation and theft.

- Smart, relevant hashtags are safe—spammy patterns aren’t.

- Check analytics and Page Quality dashboards often to catch (and reverse) penalties early.

While Meta continues to encourage the use of AI-powered creative tools, AI-generated spam remains a growing challenge on the platform. As some experts have noted, audiences can be susceptible to fake and misleading AI-generated depictions, which are not yet the central focus of Meta’s spam crackdown in 2025. Businesses and creators should remain cautious of relying too heavily on AI-driven content unless quality and authenticity are assured.